At the moment it's offline, so I'm processing the images on a Mac.

However there are smaller embedded systems that will have the capability to process data (at a slower rate). My thinking is possibly:

An Odroid C2 (64-bit, 2GB) performing the control, focus etc., then cross-mounting the images to stream them over wifi to a second system, which takes the feed and processes the images. The difference is that it's running on a dedicated embedded system (12V).

There are a couple of deep learning ideas I want to try, including noise removal and processing. The idea is that you can see both the raw images and the processed ones.

I've done a lot of OpenCL too, so I may be tempted to make a GPU library for the pipeline, but as always a GPU works best with its own memory, and you need a couple of GB to keep the images in memory or do FFTs etc.

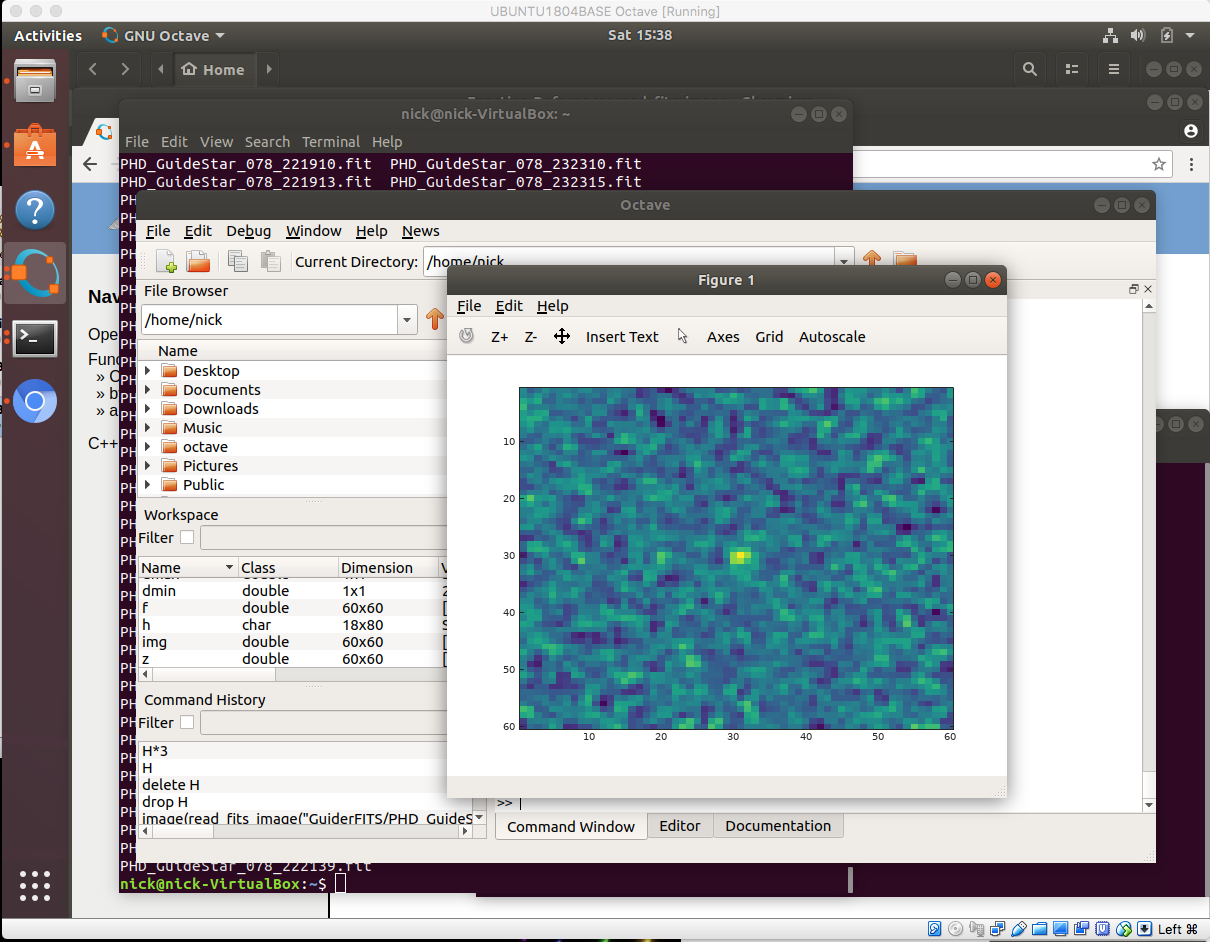

I've started playing with Octave.

My thinking here is more around the processing, but it's looking good so far.

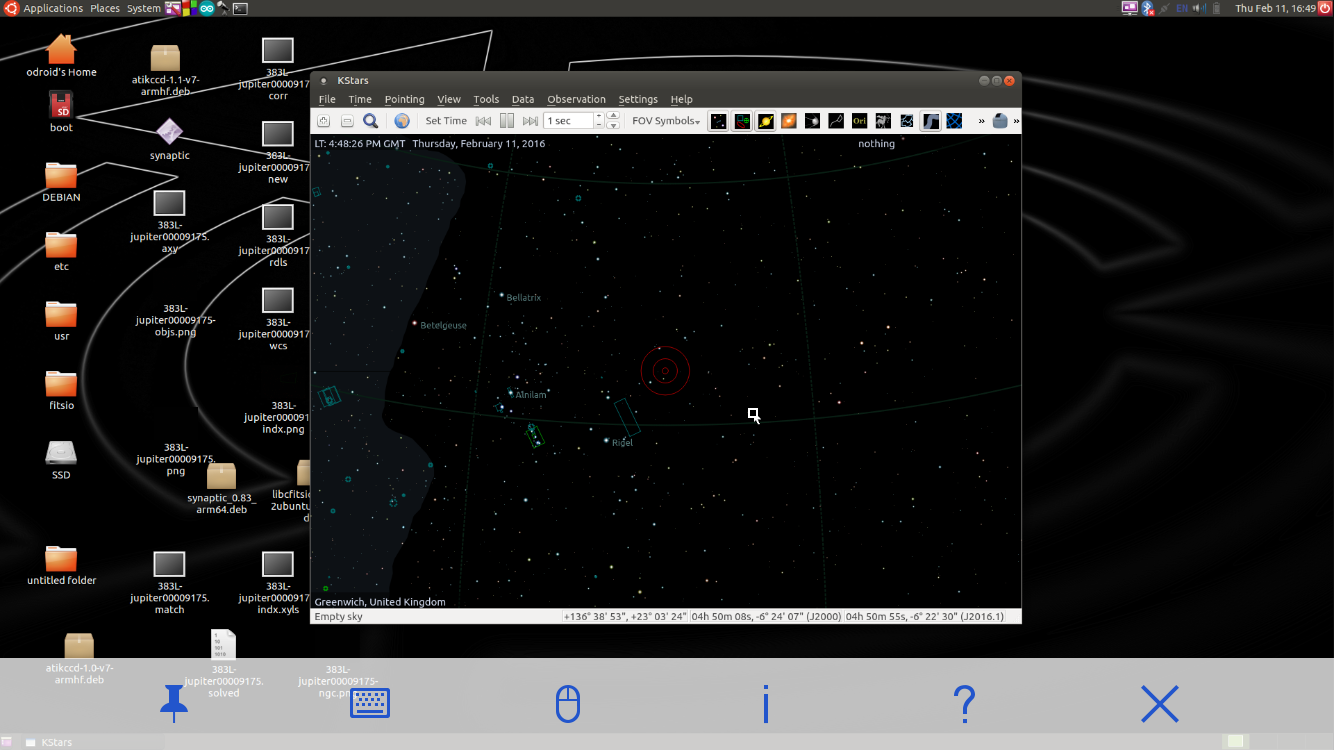

I run a 64 bit version of INDI (compiled myself) and use the 32bit version of the drivers.

It's possible.

One issue with the 64-bit version and the latest NumPy is that the astrometry script trips over NumPy's changed rules about casting:

TypeError: Cannot cast ufunc add output from dtype('int32') to dtype('uint16') with casting rule 'same_kind'

Saving to PNG for the tests was the initial workaround, but it looks like this can be fixed properly:

stackoverflow.com/questions/14269164/why...-computers-in-python

According to that comment, the change appears to have been reverted, so perhaps a Python/NumPy update will sort it out. Otherwise it looks like ".astype()" needs to be used in the script.
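A minimal sketch of the ".astype()" approach, assuming the script adds an int32 array to a uint16 image in place. The function name `add_as_uint16` and the clipping to the uint16 range are assumptions for illustration, not code taken from the astrometry script.

```python
import numpy as np

def add_as_uint16(image, offset):
    """Add an int32 offset to a uint16 image without an in-place cast.

    Hypothetical helper: the sum is done in int32, clipped to the
    uint16 range (an assumption, to avoid wrap-around), then converted
    back explicitly with .astype().
    """
    total = image.astype(np.int32) + offset
    return np.clip(total, 0, 65535).astype(np.uint16)

image = np.array([100, 200, 65535], dtype=np.uint16)
offset = np.array([1, -300, 10], dtype=np.int32)

# On NumPy versions that enforce 'same_kind' casting, the in-place form
# `image += offset` raises the TypeError quoted above, because the int32
# result cannot be written back into the uint16 array.
result = add_as_uint16(image, offset)
```

The explicit conversion makes the narrowing step visible, so the behaviour no longer depends on which casting rule the installed NumPy applies to in-place operations.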

OpenPHD2 can store guider frames (the raw FITS) for the entire run.

Perhaps not many people use this, but I use it to create the point spread function for each long exposure frame.

I've had a look but couldn't see how to do this in Ekos.. can you?
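For anyone wondering what is meant by building a point spread function from guider frames, here is a rough sketch of one possible approach, not necessarily the method used above. It assumes the guider frames recorded during one long exposure are already loaded as a NumPy array (FITS loading is left out), and the function name `estimate_psf`, the star position and the box size are all hypothetical.

```python
import numpy as np

def estimate_psf(frames, center, half_size=8):
    """Estimate a PSF from guider frames covering one long exposure.

    frames    : array of shape (N, H, W), one guider frame per slice
    center    : (row, col) nominal guide-star position (assumed known)
    half_size : half-width of the square cutout around the star

    The cutouts are summed without re-centring, so any drift of the
    star during the exposure ends up smeared into the result.
    """
    frames = np.asarray(frames, dtype=np.float64)
    row, col = center
    cut = frames[:, row - half_size:row + half_size + 1,
                 col - half_size:col + half_size + 1]
    # Subtract a per-frame background estimate (median of the cutout).
    cut = cut - np.median(cut, axis=(1, 2), keepdims=True)
    psf = np.clip(cut.sum(axis=0), 0.0, None)
    total = psf.sum()
    if total <= 0:
        raise ValueError("no star signal found in the cutout")
    return psf / total  # normalise to unit sum
```

The cutout is assumed to lie fully inside the frame; a real version would need bounds checking and some way of locating the guide star.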

Just got a new iPhone for my birthday (old one was 2009 3GS!).. VNC client app and hey presto!

djibb wrote:

Having 64 and 32 binaries of everything gets complicated and bloated.. so 64bit for everything.

I create a shell terminal session, set LD_LIBRARY_PATH to add the new 32-bit cfitsio location, and then execute indiserver on the command line. I actually do this with all my drivers.

When indiserver executes, it runs as one process and starts each driver as a separate process, with just a socket connection between them to communicate. This means that indiserver and some drivers can be 64-bit while other drivers are 32-bit, without everything needing to be one or the other.
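The same setup can be scripted rather than typed into a terminal. This is only a sketch: the helper names `driver_env` and `launch_indiserver` and the library path `/opt/cfitsio32/lib` are hypothetical, and it assumes a Linux system where the dynamic loader reads `LD_LIBRARY_PATH`.

```python
import os
import subprocess

def driver_env(extra_lib_dir, base_env=None):
    """Return a copy of the environment with extra_lib_dir searched first.

    Hypothetical helper: prepends the directory to LD_LIBRARY_PATH and
    leaves the caller's own environment untouched.
    """
    env = dict(os.environ if base_env is None else base_env)
    existing = env.get("LD_LIBRARY_PATH", "")
    if existing:
        env["LD_LIBRARY_PATH"] = extra_lib_dir + os.pathsep + existing
    else:
        env["LD_LIBRARY_PATH"] = extra_lib_dir
    return env

def launch_indiserver(drivers, extra_lib_dir):
    """Start indiserver with the given driver names as arguments.

    indiserver starts each driver as its own child process, and the
    children inherit this environment.
    """
    return subprocess.Popen(["indiserver", *drivers],
                            env=driver_env(extra_lib_dir))

# Example (not run here): launch_indiserver(["indi_simulator_ccd"],
#                                           "/opt/cfitsio32/lib")
```

Because the path is only changed in the environment passed to the child process, the rest of the session keeps using the normal 64-bit libraries.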

Lastly - you may find that, on 64-bit, the astrometry.net solver Python script incorrectly identifies the precision of the FITS image file. This is because it attempts to identify the type dynamically at runtime and gets it wrong (it seems the type identification differs between 32-bit and 64-bit Python - bad programming!). The simple workaround is to save the image as a PNG instead.
