Hi,

I set up a couple of scripts to drive the SNAP port of the AZ-GTi in order to fire a DSLR using the CCD Simulator: A "SNAP ON" script is called on Pre-Capture and a "SNAP OFF" script on Post-Capture. It works perfectly when exposing a sequence.

However, only the Pre-Capture script is called when doing a preview exposure. The same happens when framing (looping). Likewise, the Post-Capture script is not called when an exposure is aborted (either during a sequence or when aborting a preview or framing sequence).

Right now, I have to call the "SNAP OFF" script manually from a terminal window whenever any of the above happens (pressing the "stop" button would be so much easier).

Is there a reason for this behavior? Is there a simple way to modify that?

Best,

Ricardo


Hi everyone,

Just want to share my experience using an x-trans-sensor-based Fujifilm camera with ekos. After being on the edge of throwing everything away, I succeeded in finding the cause of some of the problems that others have also reported in previous threads (for instance Timothy in this post by David), in particular the slow "transfer" that several have experienced when trying to use the raw format.

The problem is the kstars FITS image viewer, not the driver (nor libgphoto2). On the raspberry pi, the viewer takes over a minute to deal with the x-trans color pattern in the RAF file. The behavior reported in the previous posts has NOTHING to do with storing the files locally or remotely; that is only an apparent consequence of what the capture module in ekos does when storing images locally. I'll explain the cause of the confusion.

But first, to give some context, I'm using kstars 3.5.4, with the latest (1.9.3 ?) Indi library, on a raspberry pi 4 with 4 GB of ram. My camera is a Fujifilm x-pro2, 24 MP x-trans III sensor. All software runs on the raspberry. I either connect a monitor+keyboard+mouse to it, or connect remotely to it using VNC. The behavior I'll describe does not depend on how I access the pi.

As described in other posts, the camera has to be configured for "USB tether shooting auto", exposure set to "B", and, in my case, shutter mode set to "Mechanical + Electronic" (the x-pro2 cannot do longer than 1 second exposures in electronic shutter, unfortunately). The camera must be ON before plugging the cable that connects it to the raspberry. The driver can then connect properly. Once connected, no physical dial or button on the camera can be used (no response from the camera), and trying to use them seems to break the communication between the camera and the raspberry.

I was using the Indi Fuji driver initially, but now I'm using the generic gphoto CCD driver; they behave the same. In the driver control panel, the sensor pixel count and size are set as per technical specs found on the web. The only other relevant setting is "Force bulb mode": OFF. As mentioned by others, the images are never stored on the camera SD card (regardless of the "SD Image" setting selected, i.e. SAVE or DELETE). This might be by Fujifilm design (seems to depend on camera model).

So, here is what I've learnt:

On the driver control panel, setting the parameter "Upload" to CLIENT sends the captured image to ekos to deal with it. Conversely, when setting it to LOCAL, the driver stores the image in the indicated folder. When set to CLIENT, ekos calls the FITS viewer to display the received image. For reasons unknown to me, the time it takes the FITS viewer to display the image is included in the image download time shown to the user. If the driver uploads the image in FITS or JPEG format, the viewer displays it fairly quickly. However, if the image is uploaded in RAF format, it takes the viewer running on the raspberry more than a minute to display it. This is despite the image being saved on the raspberry SD card within a second of finishing the exposure. Surprisingly, the whole of kstars hangs while the FITS viewer is processing the file (!).

I discovered this behavior looking at the contents of the folder where images were stored, while commanding exposures directly from the Main Control tab of the driver control panel. The image file is saved (and is readable) almost immediately after finishing the exposure, regardless of setting "Upload" to CLIENT or LOCAL.
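For anyone who prefers to flip the driver "Upload" setting from a terminal instead of the control panel, here is a minimal sketch. It assumes the `indi_setprop` command-line tool is installed, that the driver exposes INDI's standard `UPLOAD_MODE` switch (elements `UPLOAD_CLIENT`, `UPLOAD_LOCAL`, `UPLOAD_BOTH`), and that the device is called "GPhoto CCD". The device name is my assumption; check the real names with `indi_getprop` first.

```python
import subprocess

# Assumed mapping to INDI's standard UPLOAD_MODE switch elements.
UPLOAD_ELEMENTS = {
    "CLIENT": "UPLOAD_CLIENT",
    "LOCAL": "UPLOAD_LOCAL",
    "BOTH": "UPLOAD_BOTH",
}


def upload_mode_command(device, mode):
    """Build the indi_setprop argument list without running it."""
    element = UPLOAD_ELEMENTS[mode.upper()]
    return ["indi_setprop", f"{device}.UPLOAD_MODE.{element}=On"]


def set_upload_mode(device, mode):
    """Run indi_setprop against a local INDI server (must be running)."""
    subprocess.run(upload_mode_command(device, mode), check=True)


if __name__ == "__main__":
    # "GPhoto CCD" is a hypothetical device name; adjust to your setup.
    print(" ".join(upload_mode_command("GPhoto CCD", "LOCAL")))
```

Only `set_upload_mode` talks to the server; `upload_mode_command` just builds the command so it can be inspected first.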

The good news is: shooting RAF from ekos running on the raspberry pi can be as fast as shooting JPEG or FITS, if images are only stored in and not displayed by the raspberry.

Now, when commanding exposures from the ekos capture module, the behavior depends on the ekos "Upload" setting, which is not the same as the driver "Upload" setting. In the ekos capture module, LOCAL means "ekos deals with the image", and REMOTELY means "ekos only saves it". So, to prevent ekos from displaying the images, ekos should be set to upload REMOTELY (which doesn't mean a local folder can't be specified anyway). (Note: I tried unchecking the "display DSLR images" box in kstars settings / ekos tab, but it didn't work, beats me...).

However - and this was for me the source of confusion - the behavior of ekos also changes if the image is acquired as a "preview" or in a sequence: Even if the driver is set to upload LOCAL and ekos is set to upload REMOTELY, when taking a "preview" exposure, ekos changes the driver setting to CLIENT to be able to display the image and then resets it to LOCAL. Conversely, when exposing a sequence, the Upload setting in the driver remains unchanged, and the whole sequence is acquired without delays (good!).
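To keep the combinations straight, the observations above can be written down as a tiny decision table. This is only a model of what I saw on my setup, not of how ekos is actually implemented; the function names are made up for illustration.

```python
def viewer_is_invoked(ekos_upload, is_preview):
    """Return True if, per the observations above, ekos displays the image.

    ekos_upload: "LOCAL" (ekos deals with the image) or "REMOTELY"
                 (ekos only saves it).
    is_preview:  True for a preview exposure, False for a sequence.
    """
    if is_preview:
        # Previews temporarily switch the driver to CLIENT so the image
        # can be displayed, whatever the upload settings are.
        return True
    return ekos_upload.upper() == "LOCAL"


def expect_slow_download(image_format, ekos_upload, is_preview):
    """RAF files are only slow when the FITS viewer has to process them."""
    return image_format.upper() == "RAF" and viewer_is_invoked(ekos_upload, is_preview)


if __name__ == "__main__":
    for preview in (True, False):
        for upload in ("LOCAL", "REMOTELY"):
            slow = expect_slow_download("RAF", upload, preview)
            print(f"preview={preview} ekos upload={upload}: slow={slow}")
```

The only combination without the delay for RAF is a sequence with ekos set to upload REMOTELY.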

I think this explains why Dave experienced no delay when shooting raw, as opposed to others (for instance Spencer in the referred thread): When using the raspberry as an Indi server, there is no instance of ekos running on the raspberry and trying to display the uploaded images, so: no delay. As described in the other thread, Dave was using ekos on a laptop, and selected Upload REMOTELY (so images remained on the pi). He and others attributed the delay when selecting LOCAL on the laptop to the ethernet transfer speed, but it might as well mean that the FITS viewer also crawls on the laptop when "debayering" the RAF files.

Moving forward, even if these observations showed how to shoot raw from the raspberry without delay, the current conditions to achieve that are far from ideal.

First, not being able to make previews is not ideal. Of course, one can select to transfer the images as FITS, or shoot as JPEG and then switch to raw for the sequence... But then there is no way to check how the sequence is going (no HFR measurement, etc.).

Second, the camera being completely unresponsive after connecting it to the raspberry is also annoying. Combined with the slow display of RAF files, this means that all framing and focusing has to be done either before connecting the camera or by using JPEG/FITS initially and then switching to raw (this makes me think a shutter release might be a better option... but then there is no chance to do platesolving... argh). The camera body also seems to heat up a lot during the tethered session (but I'll have to test this further).

Both these issues would be resolved if the FITS viewer had a "quick and dirty" option for RAF debayering that works fast on the raspberry even if not producing the best output quality (it could even treat it as a grayscale image, not important for the practical uses within ekos...). Please, please, please?

Well, long post. I hope it helps other Fuji users out there. I'll try working with what's available for now and I'll report later.

I might post also about the FITS viewer stopping kstars in general. I'm surprised by that behavior, I wonder if it is not also the source of other problems (is that affecting guiding too? even when a big image from an astro-camera is being "downloaded"?).

Best regards and clear skies,

Ricardo


Just an update on the asteroid hunting tests...

I made some changes to the image comparison script (the new version has been updated in my original post). Now the candidate animated images are stamped with the dates/times extracted from the FITS files. I also made some other minor changes that should make it more "sensitive"; I'd rather have false positives than overlook a possible find.

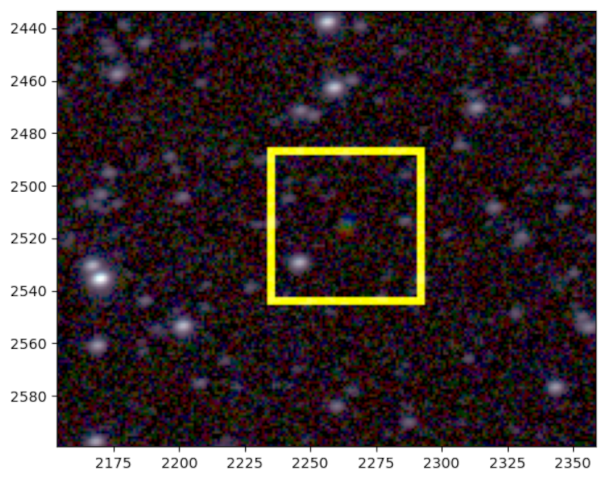

Here are a couple of animations from the last session:

(790) Pretoria, 15.01 magnitude.

(663) Gerlinde, 16.52 magnitude.

(Magnitude info is from Kstars).

Clear skies,

Ricardo


Thanks for your reply Ronald. How did you find the asteroids in the image? When I stack many subs, the rejection usually gets rid of transient phenomena...

Also thanks for the tips on the Raspberry. I also use a model 4, but with 4GB only. I don't think I have memory issues, though. I connect to it using VNC, and judging by how slow it is, I think it might be draining resources too much. I also think I might have some hardware problems, with the mount in particular (HEQ5-Pro). About a month ago the mount LED started blinking randomly (not like when power hungry, but rather as if the power connector was loose), then the whole system crashed (Raspberry going offline and not coming back). And near the end of my last imaging session (two nights ago) I was suddenly not able to guide (as if it had stopped tracking altogether), and it magically recovered the next minute... Might be the winter temperatures approaching.

Regardless, I'll have a look at my OS as you suggest. I'm curious now to see if it might help with other minor issues.

Ricardo


Hi everyone,

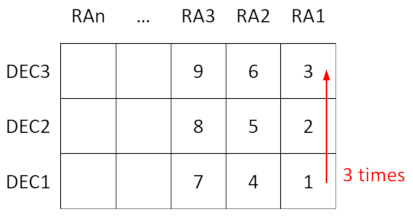

I was curious if the automation already available in Ekos could be put to use to search for asteroids. The idea was to use the scheduler to run a capture sequence on the same area of the sky three times at fixed intervals. Captured sequences could then be stacked to produce three images to compare to see if something had changed position between them. Additional fields could be imaged during the intervals, in a more or less continuous process. A swath of the sky could be scanned moving in RA direction, imaging several fields at different DEC at each RA before moving to the next RA, like this:

I started trying to manually set up a job schedule to do this, but after a while it was obvious that it was not reasonable. I have now written a Python script that creates the scheduler file (.esl) to scan a given number of RA "columns" and DEC "rows" starting from a given RA and DEC (lower right corner in the schema). It considers the field of the camera-telescope combination and has parameters for the desired overlap between images. The scheduled jobs execute a capture sequence that is predefined in Ekos. Each field in a RA column is visited three times following the order: DEC1, DEC2 ... DECn, DEC1, DEC2, ...DECn, DEC1, DEC2, ...DECn, and then the telescope moves to the next RA. Focusing is called once per RA column, in the first field (at DEC1). I'm attaching it as a .txt file to this post (needs to be changed to .py), in case someone wants to try it. As a result, 3 folders are created for each field (named e.g. Field10, Field10b, and Field10c).
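To give an idea of the visiting order without opening the attachment, here is a minimal sketch of the planning step only. It is not the attached script and it does not write the .esl file. The function name, the parameters, the assumption that RA increases with each column, and the cos(DEC) correction of the RA step are mine for illustration.

```python
import math


def plan_fields(ra0_deg, dec0_deg, n_ra, n_dec, fov_w_deg, fov_h_deg, overlap=0.1):
    """Return the ordered list of jobs for an n_ra x n_dec grid of fields.

    Every field in an RA column is visited three times (DEC1..DECn, then
    again, then again) before moving to the next RA column. Focusing is
    flagged only for the first job of each column.
    """
    dec_step = fov_h_deg * (1.0 - overlap)
    ra_step_sky = fov_w_deg * (1.0 - overlap)  # step measured on the sky
    jobs = []
    field = 0
    for col in range(n_ra):
        column = []
        for row in range(n_dec):
            field += 1
            dec = dec0_deg + row * dec_step
            # Assumption: widen the RA step by 1/cos(DEC) so the overlap
            # on the sky stays roughly constant away from the equator.
            ra = (ra0_deg + col * ra_step_sky / math.cos(math.radians(dec))) % 360.0
            column.append((f"Field{field}", ra, dec))
        for visit, suffix in enumerate(("", "b", "c")):
            for row, (name, ra, dec) in enumerate(column):
                jobs.append({
                    "name": name + suffix,
                    "ra_deg": ra,
                    "dec_deg": dec,
                    "focus": visit == 0 and row == 0,
                })
    return jobs


if __name__ == "__main__":
    for job in plan_fields(10.0, 0.0, n_ra=2, n_dec=3, fov_w_deg=1.0, fov_h_deg=0.8):
        print(job)
```

With 2 RA columns and 3 DEC rows this gives 6 fields and 18 jobs, with focusing flagged twice (once per column).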

I wrote a SiriL script to process and stack the captures from each field and to align the resulting 3 images for comparison.

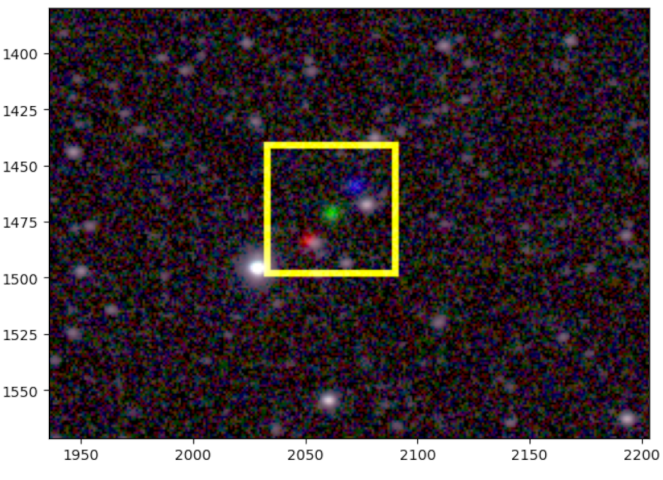

Finding moving points of light in the images "by eye" proved absurdly difficult, so I wrote a script in Python that compares the images and presents the findings in a visual way. This is the output from a couple of known asteroids:

That is (391) Ingeborg, a fairly fast moving asteroid of about 15.6 mag. Images were obtained with a 115mm f/5.6 refractor equipped with an ASI1600mm-pro camera (each image: 3x 60 sec Lum subs). The animated GIF is saved by the python script. The color image is also output by the script (each image is a color channel, showing moving objects as aligned R, G, and B dots).

This is another one, about magnitude 17.75 (can't remember the name now, will update info later). It shows the script does a good job at finding them.
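For those who want the idea without reading the attachment, here is a minimal sketch of the principle, assuming three already aligned grayscale images as NumPy arrays of the same shape. It is not the attached script; the function names and the simple threshold rule are assumptions for illustration.

```python
import numpy as np


def rgb_composite(img1, img2, img3):
    """Put each image in one color channel, scaled together to 0..1.

    Fixed stars end up white or gray (same value in all channels), while
    a moving object shows up as separate R, G, and B dots.
    """
    stack = np.stack([img1, img2, img3], axis=-1).astype(np.float64)
    lo, hi = stack.min(), stack.max()
    if hi > lo:
        return (stack - lo) / (hi - lo)
    return np.zeros_like(stack)


def moving_mask(img1, img2, img3, k=5.0):
    """Flag pixels whose value changes a lot between the three images.

    The threshold is the median spread plus k robust sigmas (from the
    median absolute deviation); this rule is an assumption of the sketch.
    """
    cube = np.stack([img1, img2, img3], axis=0).astype(np.float64)
    spread = cube.max(axis=0) - cube.min(axis=0)
    med = np.median(spread)
    sigma = 1.4826 * np.median(np.abs(spread - med))
    return spread > med + k * max(sigma, 1e-9)
```

A lower `k` makes the mask more "sensitive", at the price of more false positives on real, noisy images.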

I am attaching the image comparison script here too as a .txt file, for anyone to play with. Just be aware that I'm not a programmer (so my code is probably laughable in some parts...).

Things that don't work well yet:

useless rant --> 1. I run INDI/Ekos/Kstars on a Raspberry Pi 4 at the telescope: There has not been a single night without something failing (if it's not a general crash, it's the solver taking forever, or the guider failing in some way).

2. I haven't found a way to make the scheduler wait for the guider to stabilize before starting the capture sequence. Clearly, after the alignment procedure, waiting some seconds seems to help. I think changing the capture sequence to 6x 30sec LUM subs might help with this.

3. Did I say I use a Raspberry Pi? The scheduler takes some time to load the .esl file. I wouldn't dare include more than 6 fields in the file (6 fields are 18 jobs...).

I'm looking forward to knowing if someone else is trying something similar and what has been your approach. It would be nice sharing ideas.

Already a very long post. I hope it didn't come out too complicated because of my bad English.

Clear Skies,

Ricardo


Basic Information

Gender: Male

Birthdate: 29. 04. 1973

About me: Intermittently into astrophotography... when life allows it

Contact Information

State: Schweiz

City / Town: Fribourg

Country: Switzerland